
One of the most significant examples of this third, most intriguing approach comes from the lab of Diana Reiss, an animal-cognition researcher at Hunter College specializing in dolphins. Throughout the ’80s and early ’90s, she and Brenda McCowan, another animal researcher now at UC Davis, conducted a fascinating experiment studying the process of vocal learning in bottlenose dolphins.

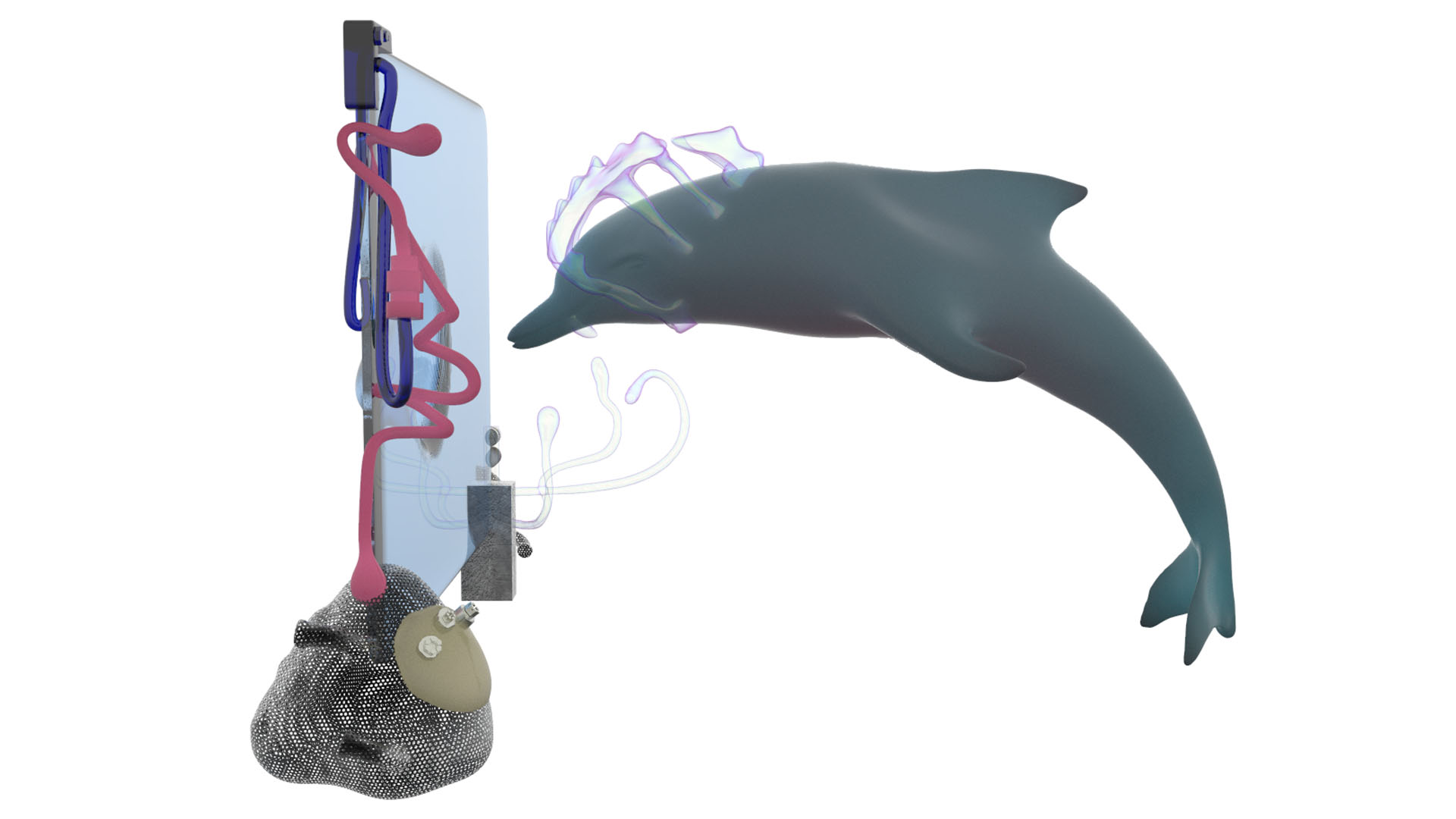

The study aimed to test how well the animals could pick up, imitate and modify vocal signals, a suite of behaviors referred to as “vocal learning.” However, instead of simply speaking to the dolphins in human language, the researchers built an underwater keyboard system that paired abstract symbols with both computer-generated whistles and desired objects and activities including a ball, a fish, a ring toy, a rub and the like. Each sound was designed to land within the animals’ vocal range while remaining distinct from their normal vocalizations. When a dolphin struck a key, the appropriate whistle would play in the tank, while at the same time a human “agent,” positioned outside the dolphin’s enclosure, was informed which button the animal had pressed via a digital speech synthesizer and would dispense the proper prize.

What sets Reiss’ experiment apart from so many others is that the dolphins she studied (two mothers and their 11-month-old male offspring) were not explicitly trained to use the keyboard. Instead, she and McCowan relied on the animals’ natural curiosity to motivate them to explore and learn the contingencies of the keyboard for themselves. Rather than prescribe a certain kind of success (the reproduction of human language, for example), their aim was simply to observe how the dolphins interacted with the keyboard and were able to use the whistles/symbols in connection to the physical objects. This “free choice” approach has been central to Reiss’ work throughout her career.

“Familiarity breeds interpretation,” Reiss told me in a recent interview about her studies. She was quoting Heini Hediger, a 20th-century Swiss scientist often referred to as “the father of zoo biology” who pioneered an observation-based approach to animal husbandry. “The more we interact with other humans and non-human species, the more we become familiar with them,” she continued, “and this familiarity increases how we interpret what the other is doing.”

The dolphins under study quickly learned that when they pressed a given symbol with their rostrum (beak), they heard a certain sound and received a toy or other desired activity in return. This simple system enabled the dolphins to request from the human interlocutors specific items or actions they otherwise were not able to ask for; it’s one of the best early examples of animal-translation tech in action.

More significant is what Reiss and McCowan found later on. After only a handful of exposures to the keyboard, the young male dolphins began to spontaneously mimic the computer-generated whistles on their own. Sonogram analyses of these whistles revealed a process not dissimilar to human babies’ babbling: The dolphins would first mimic one part of the artificial whistle, then the other, before finally stringing the entire sequence together into a reasonable facsimile of the original. Statistical studies of the calls also showed the dolphins appeared to use these whistles in appropriate contexts (such as while playing with the ball) and would occasionally produce these whistles before striking the appropriate key. Again, the dolphins were not trained to make these novel sounds; they simply picked them up in the process of exploring their new keyboard “toy.”

During the experiment’s second year, the dolphins began to combine the “ring” and “ball” sounds into a single “ring-ball” whistle, an utterance not produced by the machine. This entirely new whistle was also used in pertinent contexts (while playing a game involving both items), suggesting that the dolphins had a kind of functional understanding of what each word-whistle actually meant.

It’s tempting to conclude from these findings that Reiss and McCowan taught the dolphins to use a kind of referential language. However, the researchers caution in their paper that their account was only “a descriptive approach to the dolphins’ behavior” and that the cetaceans may indeed be responding to a kind of flexible operant conditioning.

Still, the implications for the study of dolphin behavior and vocal learning are clear. In our interview, Reiss took a wide view of how one may read into animal behavior.

“Our interpretations may not always be correct, but our interactions provide context for decoding the signals, the rich mixture of multimodal signaling that involves both vocal and nonvocal [communication],” she told me. These include “gestures, postures, movements, the way one uses the voice other than the words or signals themselves.”

Today, Reiss is reprising her keyboard work in an attempt to go even further into the dolphin’s psychology, this time using touch screens at the National Aquarium in Baltimore. So far, the dolphins there have learned how to play an underwater version of Whack-a-Mole that uses digital fish.

But a dolphin pressing a button or even whistling the code for “ball” is perhaps not so different from a cat at a closed door mewing to be let out. What about directly translating the “native tongues” of animals, the semi-secret codes they use to speak to one another?

“As scientists learn more about the functional use of signals in other animals, and we get better and better approaches to decipher body movements and patterns of interactions, we may be able to create more translational devices,” Reiss told me. But, like the other specialists I spoke to for this piece, she cautions that much more research is needed.

Others in the field, however, believe we’re already on the cusp of such advances. Con Slobodchikoff, a professor emeritus of biology at Northern Arizona University, has published several papers suggesting that prairie dogs, America’s favorite burrowing rodent, can produce specialized alarm calls to warn their compatriots about various threats.

In a series of experiments with colonies of Gunnison’s prairie dogs, Slobodchikoff and his lab have shown that the animals appear to have developed distinguishable calls for hawks, coyotes, domestic dogs, and humans. The different calls appear to also produce distinct responses, from running into a burrow to standing in place, watching the predator while preparing to flee. In fact, Slobodchikoff has gone so far as to refer to these calls as a “Rosetta Stone” for animal communication. His work joins a growing body of research into functionally referential signals, sounds made by a variety of critters including vervet monkeys, meerkats and chickens that appear to serve as specific, semantic warnings that prompt specific actions. These calls are usually made in response to various predators, but may also be uttered while searching for food.

In a recent conversation, Slobodchikoff told me that his translation work is part of a greater mission to extend our understanding of the complex societies of animals that exist all around us. “My goal is to help people develop meaningful partnerships with animals,” he told me, “instead of thinking of animals as unfeeling, unthinking, brutes that run on a program of instinct.” It’s worth remembering that prairie dogs are considered vermin in the areas of the American Southwest they inhabit and are often shot for sport or poisoned en masse as pest control.

By analyzing the sonograms of prairie-dog calls made in various contexts and reproducing them in playback scenarios, Slobodchikoff and his team have also found that these calls and the manner in which they are used seem to contain a plethora of information about individual threats, including color, speed, direction of approach and shape. Each call is also made up of several smaller units of sound that Slobodchikoff has compared to nouns, adjectives, and verbs.

It’s far from a simple cry of “coyote!” or “hawk!” In fact, the researcher suggests that these vocalizations are full of vital descriptions that are at times astoundingly plastic. In one experiment, Slobodchikoff found that the rodents produced distinct calls for humans wearing blue versus yellow shirts, a level of specificity that seems to go beyond simple threat avoidance. In another, his team rigged a pulley system that sent black cardboard shapes (a circle, triangle, large square, and small square) through a prairie-dog colony. Incredibly, each of the shapes was met with an acoustically distinct alarm call, suggesting that the animals are able to construct novel calls essentially on the fly in response to totally unknown (and in this case entirely unnatural) stimuli.

As with all good research, Slobodchikoff’s prompts yet more questions: If prairie dogs are capable of this kind of semantic complexity, can other animals be much different?

Slobodchikoff has recently founded Zoolingua, a company that, according to its website, is “working to develop technologies that will decode a dog’s vocalizations, facial expressions and actions and then tell the human user what the dog is trying to say.” The researcher cites his experience as an animal researcher and behavioral consultant as an inspiration behind his decision to move into consumer tech.

“One of the consistent themes I saw was that people misinterpreted what their pets were trying to communicate to them,” he told me, mentioning the story of a former client. “[The man] takes me over to his dog, who is standing in a corner. He towers over the dog, growls ‘good dog’ in a low-pitched voice and extends his hand above the dog’s head. The dog bares his teeth and growls. The man says to me, ‘See, my dog is aggressive and wants to bite me.’” According to Slobodchikoff, “With a dog-translation device, the device could say to the man, ‘Back off, you’re scaring me, give me some space.’”

One can’t help but wonder if the man in this anecdote couldn’t have figured out what was going on without the help of a specialist, or a high-tech device. But there’s also the question of whether or not dogs can really “talk” like we do in the first place.

Slobodchikoff was cagey about revealing the precise technology that powers Zoolingua in our interviews, citing “proprietary information.” But an article by Bahar Gholipour at NBC News reports that “Slobodchikoff is amassing thousands of videos of dogs showing various barks and body movements,” and that “he’ll use the videos to teach an AI algorithm about these communication signals.” The Zoolingua team will likely still have to label and interpret what each movement means initially. As the system becomes more refined, however, there may be opportunities for the program to discover ever-subtler indications of dogs’ moods and desires.

In an interview with Alexandra Horowitz, a leading canine-cognition scientist at Barnard College and the author of Inside of a Dog: What Dogs See, Smell, and Know, the researcher was unequivocal in her account of canines’ abilities to express themselves:

“Dogs talk; they communicate, with dogs and with people, and sometimes with inanimate objects or things that go bump in the night. They talk with vocalizations, to some degree, but also with body posture, tail height and movement, facial (ear, eye and mouth) expressions, and with behavior. Most significantly, they communicate with olfactory cues — much of which is presumably lost on humans, as we are not especially tuned in to information in odors.”

Horowitz went on to reference the work of Marc Bekoff and others on play bows, the head-down, butt-in-the-air posture that dogs assume to signal their intent to chase or wrestle, as a well-studied example of dogs’ use of body language in their communication. She also referred me to the work of the Family Dog Project, a Budapest-based team of canine researchers founded in 1994 by Vilmos Csányi, Ádám Miklósi and József Topál that explores various aspects of dog communication and cognition, including their use of context-specific vocalizations.

Over the past two decades, the Family Dog Project has found that domestic dogs produce at least three acoustically distinct growls (one to deter threatening strangers, one to warn against touching their food, and one used in play situations) as well as six discriminable barks: those emitted when left alone, during a simulated fight scenario, in the presence of a stranger, in response to a ball/toy, while playing with their owner and in response to being prompted to go on a walk. Family Dog Project scientists have also begun using machine-learning algorithms to categorize these various barks, opening the door to the possibility of creating an automated dog-decoder that can translate behavior into human language.


In the research team’s 2007 paper, “Classification of dog barks: a machine learning approach,” Csaba Molnár et al. write that “the algorithm could categorize the barks according to their recorded situation with an efficiency of 43%,” an accuracy well above chance and comparable to that of many human analysts. The technique they’re using is also fairly standard for machine-learning systems: First, the program is “trained” on a set of labeled samples (in this case, audio files of the barks), from which it derives sets of increasingly accurate descriptors. When the efficiency of these descriptors in identifying the different contexts is sufficiently high, the system then generates a set of classifiers, which it can then use to label unknown samples.
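For readers who want to see the train-then-label loop in miniature, here is a rough sketch in Python. It is not the method from the Molnár paper: the classifier is a simple nearest-centroid stand-in, the three-number “descriptors” are invented rather than extracted from audio, and the six context names in `CONTEXTS` are hypothetical labels loosely based on the bark situations listed above. It only illustrates the general shape of the process: learn from labeled samples, then label samples the system has not seen.

```python
import random

# Hypothetical labels, loosely following the six bark situations described above.
CONTEXTS = ["alone", "fight", "stranger", "ball", "play", "walk"]


def make_synthetic_barks(n_per_context, rng, noise=1.0):
    """Return (descriptor, label) pairs. The numbers are invented, not real audio features."""
    samples = []
    for idx, context in enumerate(CONTEXTS):
        # Each context gets its own made-up "typical" descriptor.
        center = (float(idx), float(idx % 3), float(idx % 2))
        for _ in range(n_per_context):
            descriptor = tuple(c + rng.gauss(0.0, noise) for c in center)
            samples.append((descriptor, context))
    return samples


def train_centroids(labeled):
    """Training step: average the descriptors of every labeled context."""
    grouped = {}
    for descriptor, label in labeled:
        grouped.setdefault(label, []).append(descriptor)
    return {
        label: tuple(sum(axis) / len(rows) for axis in zip(*rows))
        for label, rows in grouped.items()
    }


def classify(centroids, descriptor):
    """Label an unknown sample with the context whose centroid is closest."""
    def squared_distance(label):
        return sum((a - b) ** 2 for a, b in zip(centroids[label], descriptor))
    return min(centroids, key=squared_distance)


def accuracy(centroids, labeled):
    """Fraction of samples whose predicted context matches the recorded one."""
    hits = sum(classify(centroids, d) == label for d, label in labeled)
    return hits / len(labeled)


rng = random.Random(0)
data = make_synthetic_barks(100, rng)
rng.shuffle(data)
split = int(0.8 * len(data))
train, held_out = data[:split], data[split:]  # the held-out barks play the "unknown samples"

centroids = train_centroids(train)
held_out_accuracy = accuracy(centroids, held_out)
chance = 1 / len(CONTEXTS)  # assumes all six contexts are equally common

print(f"held-out accuracy: {held_out_accuracy:.2f}, chance: {chance:.2f}")
```

The `chance` line is the useful yardstick here. If the six contexts were equally represented (an assumption, not something the article states), blind guessing would be right about one time in six, or roughly 17%, which is why a reported 43% can be described as well above chance.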

As the experimenters note in their report, this process has the advantage of eliminating some sources of human bias. The system’s criteria for classifying the various barks can be arrived at independently during the training period, and aren’t necessarily the same as those of its flesh-and-blood counterparts, meaning that advanced machine-learning algorithms can pick up patterns in the data invisible to most people.

Molnár et al. write that “no information linked with the particular problem of bark classification was included at any stage of the process,” explaining that this technique “limits the number of potential preconceptions that researchers may introduce into the construction of good descriptors of the data and permits discovery of structure that they may have otherwise missed.” This feature of machine learning makes it exceptionally useful in the analysis of behavior: patterns of movement, sounds, gaze, and more, thus far unnoticed by human observers, can be picked up and amplified by machine-learning technology.

It’s precisely this capacity to automate what to this point has been a painstaking effort (mostly by unpaid graduate students) that could make commercial animal translators a reality in the near future. If the tech is going to work as advertised, machine learning seems all but necessary, and many animal-translator projects I investigated are racing to harness it.
